How Advanced Optics and Camera Modules Enable Autonomy, Analytics, and Resilient Farming

Smart agriculture increasingly depends on imaging systems to automate field monitoring, detect crop stress, identify pests, guide autonomous equipment, and generate precision analytics. The optical requirements span an unusually wide range: from wide-area drone survey lenses to close-range multispectral sensors, from RGB day cameras to NIR-enabled night inspection systems. No single off-the-shelf lens handles all of these applications, and every one of them has to cope with harsh agricultural field conditions.

This article covers the key imaging applications in smart agriculture, the optical performance parameters that matter for each, and how Sunex’s miniature lens and camera module portfolio addresses the needs of agricultural OEMs and integrators.

What imaging systems are used in smart agriculture and precision farming?

Smart Agriculture is rapidly evolving from connected equipment toward closed-loop, perception-driven systems that sense, decide, and act—at scale and at the edge. Imaging is at the center of this transformation. Cameras provide spatial context and plant-level insight that enable autonomy, analytics, and increasingly, direct yield optimization through precision intervention.

Modern agricultural imaging systems support a wide range of applications, from autonomous harvesting and machine guidance to aerial crop analytics, environmental intelligence, and facility automation. More recently, vision has become a key enabler of plant-specific action, including selective weed treatment, mechanical thinning or picking, and in-harvest crop counting and quality assessment. These applications deliver immediate economic value by reducing chemical inputs, lowering labor dependency, and improving yield consistency and traceability.

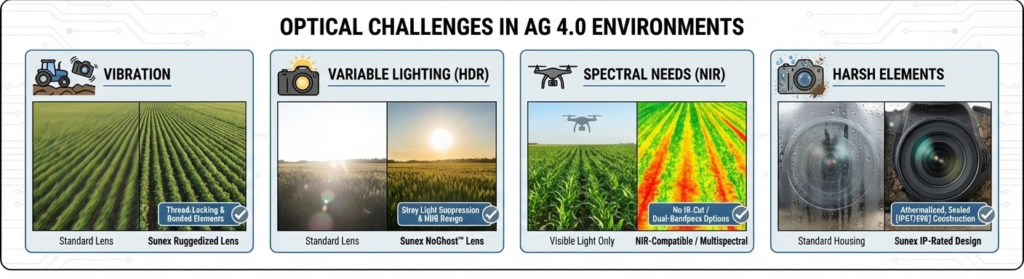

Deploying imaging successfully in agricultural environments requires more than selecting a sensor. Systems must perform reliably under extreme lighting variation, dust, moisture, vibration, and temperature swings—often for long operating hours with limited maintenance. Optical design, manufacturability, and camera module integration play a critical role in determining real-world performance, calibration stability, and scalability.

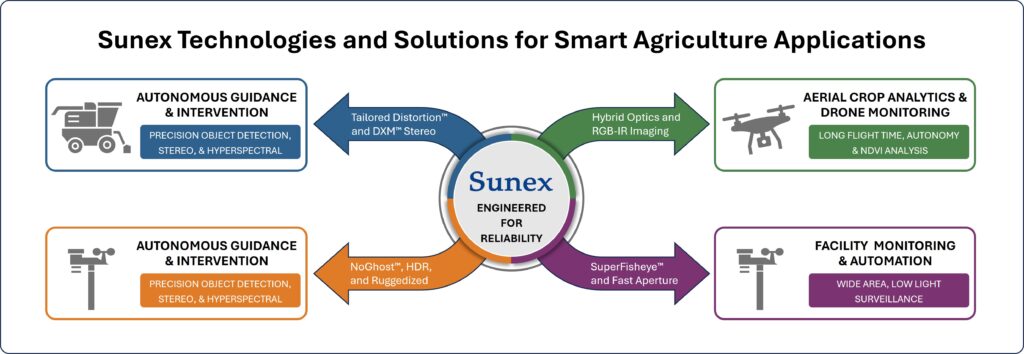

Sunex supports Smart Agriculture imaging across this full spectrum of applications through precision optics, robust wide-field designs, and advanced technologies such as DXM™ single-sensor stereo imaging. By enabling reliable depth perception, repeatable geometry, and production-ready camera modules, Sunex helps customers translate imaging performance into operational reliability, scalable deployment, and measurable yield improvement.

What optical requirements do agricultural imaging systems need?

Agriculture is simultaneously an outdoor robotics problem, an environmental sensing problem, and a logistics problem. The farm is not a controlled factory floor: lighting changes by the minute; airborne particulates fluctuate with wind and field operations; surfaces are irregular; and targets—plants—are living structures that change over days and weeks. Imaging provides the flexibility to handle this variability because it captures dense spatial information. Modern perception stacks transform pixels into actionable insights: navigation lines, crop health indices, fruit counts, weed segmentation, obstruction classification, and anomaly detection.

Yet cameras do not operate in isolation. Imaging performance is the product of:

- Optics (lens design, FOV, distortion, stray light control, focus stability)

- Sensor (pixel size, dynamic range, shutter type, NIR sensitivity)

- Illumination (sun and sky, artificial lighting, spectral characteristics)

- Mechanics (alignment stability, sealing, thermal behavior)

- Compute (ISP, edge inference, compression and streaming)

- Manufacturing (tolerances, repeatability, calibration strategy)

In agriculture, “good enough” optical choices often fail at the system level: a lens that looks fine in a lab can wash out in low sun glare, drift focus across temperature, or produce distortion that breaks row-detection geometry. Conversely, robust optical and module design reduces software complexity and improves model generalization, which directly impacts time to deployment and system reliability.

Sunex approaches this problem from an optics-first but system-aware standpoint: lens performance is developed alongside manufacturability, environmental stability, and integration constraints so camera systems can ship reliably at volume.

Cross-Cutting Requirements for Agricultural Imaging Systems

Lighting and Dynamic Range

Field conditions combine high-contrast scenes (bright sky and shaded canopy) with strong specular reflections (wet leaves, irrigation water, plastic mulch, metal roofs). Cameras require:

- High dynamic range (HDR) capability, supported by both the sensor and the optics

- Stray light and ghosting control in the lens to preserve contrast

- Optional RGB-IR or NIR sensitivity for dusk/dawn or vegetation analytics
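As a rough illustration of the HDR requirement, the Python sketch below compares the luminance range of a scene with the range a sensor can capture in a single exposure. Both are expressed in decibels using the 20·log10 convention that is common for image sensors. All numbers are illustrative assumptions (a bright sky against deep canopy shade, and a hypothetical sensor), not measured values or Sunex specifications. Lens flare, which further reduces usable contrast, is not modeled.

```python
import math


def ratio_to_db(ratio: float) -> float:
    """Express an intensity ratio in dB (20*log10), the usual image-sensor convention."""
    return 20.0 * math.log10(ratio)


def sensor_dr_db(full_well_e: float, read_noise_e: float) -> float:
    """Single-exposure sensor dynamic range: full-well capacity over read noise (electrons)."""
    return ratio_to_db(full_well_e / read_noise_e)


def scene_dr_db(brightest_cd_m2: float, darkest_cd_m2: float) -> float:
    """Scene dynamic range between the brightest and darkest regions that must stay usable."""
    return ratio_to_db(brightest_cd_m2 / darkest_cd_m2)


# Assumed, illustrative values: sunlit sky vs. deep canopy shade, and a hypothetical sensor.
scene_db = scene_dr_db(brightest_cd_m2=20_000.0, darkest_cd_m2=2.0)
sensor_db = sensor_dr_db(full_well_e=10_000.0, read_noise_e=2.0)

print(f"scene: {scene_db:.1f} dB, single exposure: {sensor_db:.1f} dB")
if sensor_db < scene_db:
    # Shortfall: multi-exposure HDR, a higher-DR sensor, or exposure priorities are needed.
    print(f"shortfall of {scene_db - sensor_db:.1f} dB")
```

Multi-exposure HDR can close a gap like this on the sensor side. However, stray light and ghosting in the lens raise the signal floor in dark regions, which erodes that benefit.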

How Sunex helps:

Sunex designs lenses optimized for contrast and environmental reliability, supporting imaging modalities that demand consistent performance under challenging illumination and across their operating life.

FOV vs. Resolution Trade Space

Autonomous machines need wide coverage to see rows, edges, people, and obstacles, but analytics tasks often require high spatial detail to measure leaf-level features or detect disease patterns. This leads to multi-camera architectures with:

- Wide-FOV navigation cameras (including SuperFisheye™)

- Narrower-FOV inspection cameras (higher magnification / detail)

- DXM™ configurations that enable stereo or dual-FOV imaging on a single sensor
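Basic pinhole geometry can quantify the trade-off between coverage and detail. The sketch below assumes a rectilinear lens, so it does not describe fisheye projections, whose mapping differs. The FOVs, distances, and pixel counts are hypothetical examples.

```python
import math


def footprint_width_m(hfov_deg: float, distance_m: float) -> float:
    """Horizontal scene width covered at a given distance by a rectilinear (pinhole) lens."""
    return 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)


def mm_per_pixel(hfov_deg: float, distance_m: float, horizontal_pixels: int) -> float:
    """Object-space sampling on a plane facing the camera, in millimetres per pixel."""
    return footprint_width_m(hfov_deg, distance_m) * 1000.0 / horizontal_pixels


# Hypothetical cameras sharing a 1920-pixel-wide sensor.
cameras = {
    "navigation (120 deg HFOV at 5 m)": (120.0, 5.0),
    "inspection (30 deg HFOV at 0.5 m)": (30.0, 0.5),
}
for name, (hfov, dist) in cameras.items():
    print(f"{name}: {footprint_width_m(hfov, dist):.2f} m wide, "
          f"{mm_per_pixel(hfov, dist, 1920):.2f} mm/pixel")
```

With the same sensor, the hypothetical inspection camera samples its scene roughly 65 times more finely than the navigation camera. This is why such architectures split the two jobs across different lenses.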

How Sunex helps:

Sunex offers an extensive off-the-shelf portfolio (including many M12-format options) and custom optical solutions that enable a wide range of applications.

Environmental Robustness

Dust, mud, fertilizer mist, cleaning chemicals, UV exposure, and temperature swings can degrade systems quickly. Key optical and module considerations:

- Sealing strategy and protective windows

- Coating durability and cleaning compatibility

- Focus stability versus temperature and mechanical stress

- Vibration and shock tolerance for off-road machinery
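Focus stability versus temperature can be checked to first order. The check compares how far the best-focus plane moves relative to the sensor with how much defocus the optics tolerate. The sketch rests on four assumptions:

- The object is at infinity, so the image distance is close to the focal length.
- A single lumped thermal coefficient describes the lens's focal length.
- One mount material sets the lens-to-sensor spacing.
- The image-side depth of focus is about ±N·c.

Every numeric value is a hypothetical example, not a Sunex design parameter. Real athermal designs balance several materials and elements and are normally verified with full optical and thermal modeling.

```python
def thermal_defocus_um(focal_mm: float, mount_len_mm: float,
                       focal_coeff_per_k: float, mount_cte_per_k: float,
                       delta_t_k: float) -> float:
    """First-order image-plane error (micrometres) for an object at infinity.

    Positive means the best-focus plane ends up behind the sensor.
    """
    focus_shift_mm = focal_mm * focal_coeff_per_k * delta_t_k      # where focus moves
    sensor_shift_mm = mount_len_mm * mount_cte_per_k * delta_t_k   # where the sensor moves
    return (focus_shift_mm - sensor_shift_mm) * 1000.0


def depth_of_focus_um(f_number: float, coc_um: float) -> float:
    """Approximate half-width of the image-side depth of focus (+/- N * c)."""
    return f_number * coc_um


# Hypothetical 6 mm f/2.0 lens in an aluminium-like mount (CTE ~23e-6/K),
# with the circle of confusion taken as two 3 um pixels.
tolerance_um = depth_of_focus_um(f_number=2.0, coc_um=6.0)
for t_c in (-40.0, 25.0, 85.0):
    shift = thermal_defocus_um(6.0, 6.0, focal_coeff_per_k=-5e-6,
                               mount_cte_per_k=23e-6, delta_t_k=t_c - 25.0)
    status = "ok" if abs(shift) <= tolerance_um else "out of focus"
    print(f"{t_c:+.0f} C: defocus {shift:+.1f} um (budget +/-{tolerance_um:.0f} um) {status}")
```

Both temperature extremes stay inside the budget in this example, but only narrowly. A wider temperature range, a faster aperture, or smaller pixels would all shrink the margin.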

How Sunex helps:

Sunex emphasizes robust lens and module designs, including fully athermalized systems, with close attention to environmental stability. Repeatable assembly processes keep part-to-part variance small, supporting long-life deployment in harsh settings.

Calibration, Repeatability, and Scale

Agricultural autonomy and analytics depend on repeatable camera geometry. Inconsistent focal length, distortion, or optical axis alignment increases calibration burden and can degrade model performance across fleets.
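Part-to-part variation matters for fleets because units are often calibrated against a shared nominal model. The sketch below projects the same normalized ray twice: once through a nominal calibration and once through a single unit's actual parameters. It uses the common pinhole model with radial distortion terms k1 and k2 (the Brown-Conrady form OpenCV also uses, here without tangential terms). The parameter values are invented for illustration. The resulting pixel error grows toward the image corners, where small focal-length and distortion differences add up.

```python
import math


def project(x_n: float, y_n: float, fx: float, fy: float,
            cx: float, cy: float, k1: float, k2: float) -> tuple[float, float]:
    """Map a normalized camera coordinate to pixels using radial distortion (k1, k2)."""
    r2 = x_n * x_n + y_n * y_n
    radial = 1.0 + k1 * r2 + k2 * r2 * r2
    return fx * x_n * radial + cx, fy * y_n * radial + cy


def reprojection_error_px(point: tuple[float, float], nominal: dict, unit: dict) -> float:
    """Pixel error when the nominal calibration is applied to a unit whose true parameters differ."""
    u_nom, v_nom = project(*point, **nominal)
    u_act, v_act = project(*point, **unit)
    return math.hypot(u_act - u_nom, v_act - v_nom)


# Hypothetical nominal design and one production unit (0.5% focal error, small
# principal-point offset, slightly stronger barrel distortion).
nominal = dict(fx=1000.0, fy=1000.0, cx=960.0, cy=540.0, k1=-0.30, k2=0.08)
unit = dict(fx=1005.0, fy=1005.0, cx=962.0, cy=539.0, k1=-0.31, k2=0.08)

for label, pt in (("center", (0.0, 0.0)), ("corner", (0.8, 0.45))):
    print(f"{label}: {reprojection_error_px(pt, nominal, unit):.2f} px")
```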

How Sunex helps:

Sunex’s manufacturing and integration capabilities—such as precision assembly and fully automated 6-axis active alignment for camera modules—support consistent optical performance, enabling scalable calibration strategies and more predictable field performance.

Application Area 1: Autonomous Harvesting & Machine Guidance

Autonomous harvesting and machine guidance represent one of the most technically demanding vision applications in Smart Agriculture. Agricultural machinery must operate safely and accurately in open, unstructured environments where lighting, dust, terrain, and crop conditions vary continuously. Imaging systems provide the spatial understanding required for these machines to navigate crop rows, align headers and implements, coordinate with grain carts, and detect obstacles such as people, animals, or debris in real time.

Unlike factory automation, agricultural autonomy cannot rely on fixed markers or controlled surfaces. Vision algorithms must infer position and intent from natural features such as row geometry, canopy structure, stubble edges, and machine-to-crop relationships. This places a strong dependency on optical consistency. Lens field of view, distortion behavior, contrast performance, and focus stability directly affect how reliably perception algorithms perform across different fields, crops, and seasons.

Modern autonomous harvesting platforms typically employ a multi-camera architecture. Wide-field cameras provide situational awareness and navigation context, while more focused cameras monitor critical interaction zones such as headers, cutters, and implements. Increasingly, depth perception is added to improve machine control, safety, and robustness—particularly in scenarios involving uneven terrain, varying crop height, or dynamic interactions between multiple vehicles.

Stereo imaging is especially valuable in these use cases, enabling direct distance estimation and three-dimensional scene understanding without reliance on external infrastructure. Depth information improves obstacle detection, row height estimation, header positioning, and collision avoidance, while also reducing ambiguity in low-contrast or partially occluded scenes. Traditionally, stereo vision systems have required two separate cameras and a carefully controlled baseline, increasing system complexity, size, and calibration effort.
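Both the value and the limits of stereo depth follow from the standard relation for a rectified stereo pair, Z = f·B/d. Here f is the focal length in pixels, B the baseline, and d the disparity. Differentiating gives a depth uncertainty that grows with the square of distance. That is why baseline, focal length, and sub-pixel matching accuracy are central design parameters. The relation holds for any rectified stereo pair, including two optical channels sharing one sensor. The numbers below are assumptions, not DXM™ specifications.

```python
def depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth from disparity for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0.0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px


def depth_uncertainty_m(focal_px: float, baseline_m: float, depth: float,
                        disparity_error_px: float = 0.25) -> float:
    """First-order depth error: dZ = Z**2 / (f * B) * dd."""
    return depth ** 2 / (focal_px * baseline_m) * disparity_error_px


# Assumed values: 800 px focal length, 6 cm baseline, 0.25 px matching error.
F_PX, B_M = 800.0, 0.06
print(f"24 px disparity -> {depth_m(F_PX, B_M, 24.0):.2f} m")
for z in (1.0, 2.0, 4.0, 8.0):
    print(f"at {z:.0f} m: +/- {100 * depth_uncertainty_m(F_PX, B_M, z):.1f} cm")
```

Doubling the distance quadruples the uncertainty. A larger baseline or a longer focal length reduces it proportionally, at the cost of size or field of view.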

Sunex DXM™ technology addresses this challenge by enabling single-sensor stereo imaging, where two optical channels project spatially separated views onto a single image sensor. This approach delivers true stereo depth information while simplifying mechanical integration, synchronization, and manufacturing. For agricultural machinery, DXM™ offers a compelling balance between performance and robustness, reducing the alignment sensitivity and drift risks associated with dual-camera systems operating under vibration and thermal cycling.

From an optical standpoint, autonomous harvesting lenses—whether mono or stereo—must tolerate severe environmental stress while maintaining stable geometry. Low-angle sun, airborne dust, vibration, and temperature swings can all degrade image quality if optics are not specifically designed for these conditions. Optical performance, therefore, becomes a system enabler: better contrast, controlled distortion, and repeatable geometry directly reduce perception errors and software compensation overhead.

Key imaging and optical requirements for autonomous harvesting include:

- Wide to ultra-wide fields of view for navigation and situational awareness

- Stable, repeatable distortion characteristics to support calibration and depth estimation

- High contrast and low flare performance in sun-facing and dusty environments

- Robust mechanical and thermal stability for long operating hours

- Optional stereo or depth capability to enhance safety and precision control

How does Sunex advance autonomous harvesting and machine guidance?

Sunex supports autonomous agricultural platforms through a combination of wide-FOV optics, manufacturable lens designs, and advanced stereo imaging capabilities. Sunex DXM™ single-sensor stereo technology enables compact, robust depth perception well-suited for off-highway vehicle (OHV) machinery, reducing system complexity while improving spatial awareness. Combined with Sunex’s focus on production consistency and camera module integration, these capabilities help customers deploy scalable, reliable vision systems that perform consistently across fleets and operating seasons.

Application Area 2: Precision Crop Intervention & Yield Optimization

Precision crop intervention represents one of the most direct and measurable ways imaging systems improve agricultural outcomes. Unlike navigation or large-scale analytics, these applications operate at the individual plant level, where decisions translate immediately into reduced input costs, improved yield, and higher crop quality. Imaging enables machines not only to observe crops, but to act selectively and intelligently—treating the right plant, at the right time, in the right way.

Typical use cases include automated weed detection and selective spraying, mechanical weed removal or thinning, targeted disease or nutrient treatment, and crop counting or grading during harvesting. These systems are often deployed on sprayers, cultivators, and harvesters, where cameras are mounted close to the crop canopy or directly adjacent to tools such as spray nozzles, cutters, or picking mechanisms. As a result, imaging requirements differ significantly from those used for navigation or aerial monitoring.

Precision intervention systems demand high spatial resolution at close working distances, along with extremely low latency. Vision algorithms must detect, classify, and localize plants or weeds in real time—often at vehicle speeds—while maintaining consistent performance under variable lighting, dust, and vibration. Optical performance is therefore tightly coupled to actuation accuracy: any uncertainty in image geometry or depth estimation can lead to missed treatments, crop damage, or wasted chemicals.
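The link between latency and actuation accuracy is simple arithmetic: the machine keeps moving while an image is captured, processed, and turned into a command. The sketch below estimates how far ahead of a nozzle or tool the camera must look. It also estimates how far timing jitter alone displaces a treatment. The speed, latency split, and safety margin are hypothetical values for illustration.

```python
def travel_m(speed_kmh: float, duration_ms: float) -> float:
    """Distance covered at constant ground speed during a time interval."""
    return speed_kmh / 3.6 * duration_ms / 1000.0


def required_lookahead_m(speed_kmh: float, capture_ms: float, inference_ms: float,
                         actuation_ms: float, margin_m: float = 0.05) -> float:
    """Minimum camera-to-tool offset so a decision is ready before the target reaches the tool."""
    total_ms = capture_ms + inference_ms + actuation_ms
    return travel_m(speed_kmh, total_ms) + margin_m


# Hypothetical sprayer at 12 km/h with a 20 + 40 + 30 ms pipeline and 10 ms of jitter.
lookahead = required_lookahead_m(12.0, capture_ms=20.0, inference_ms=40.0, actuation_ms=30.0)
jitter_error = travel_m(12.0, 10.0)
print(f"camera must see at least {lookahead:.2f} m ahead of the nozzle")
print(f"10 ms timing jitter alone shifts the spray by {100 * jitter_error:.1f} cm")
```

Both figures scale linearly with speed. A pipeline that is adequate at walking pace may therefore need more look-ahead or lower latency on a fast sprayer.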

One of the key challenges in these applications is separating crops from weeds in dense or overlapping vegetation. This is especially difficult in later growth stages, where occlusion and varying plant height introduce ambiguity in two-dimensional imagery. Here, depth perception becomes a major advantage, enabling machines to distinguish plant structures spatially and to target interventions more accurately.
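One simple way depth helps here is by converting it into height above the ground, which separates vegetation layers. The sketch assumes a camera looking straight down from a known height over flat ground, so height equals camera height minus depth. The thresholds and the "crop" and "low vegetation" labels are hypothetical. In practice, such a height cue would complement a semantic classifier rather than replace it.

```python
def heights_above_ground(depth_map_m: list[list[float]], camera_height_m: float) -> list[list[float]]:
    """Height of each pixel's surface above an assumed flat ground plane (nadir camera, Z-depth)."""
    return [[max(camera_height_m - z, 0.0) for z in row] for row in depth_map_m]


def label_layers(heights_m: list[list[float]], ground_max_m: float = 0.02,
                 crop_min_m: float = 0.15) -> list[list[str]]:
    """Label pixels by height band; the band edges are illustrative assumptions."""
    def label(h: float) -> str:
        if h < ground_max_m:
            return "ground"
        return "crop" if h >= crop_min_m else "low vegetation"
    return [[label(h) for h in row] for row in heights_m]


# Tiny hypothetical depth map (metres) from a camera mounted 1.0 m above the soil.
depth = [[1.00, 0.90],
         [0.70, 0.99]]
for row in label_layers(heights_above_ground(depth, camera_height_m=1.0)):
    print(row)
```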

Stereo imaging plays an increasingly important role in this context. Depth information improves weed discrimination, tool positioning, and spray targeting by providing three-dimensional context that complements semantic classification. However, traditional dual-camera stereo systems add complexity, size, and calibration sensitivity—challenges that are amplified when cameras are mounted near moving tools and exposed to vibration and debris.

Sunex DXM™ single-sensor stereo technology is particularly well-suited for precision crop intervention. By projecting two spatially separated views onto a single image sensor, DXM™ delivers true stereo depth while simplifying mechanical integration and synchronization. This approach reduces system size and alignment risk, making it easier to embed depth perception directly into tool-mounted camera systems. For applications such as depth-aware spraying, mechanical picking, or plant counting in dense canopies, DXM™ enables more robust and repeatable control at the point of action.

In addition to real-time intervention, imaging during harvesting enables yield measurement and validation at the moment of collection. Cameras mounted on harvesters can count fruit, ears, or plants, estimate size and quality, and correlate yield data with location and conditions in the field. This information closes the loop between treatment decisions earlier in the season and actual harvest outcomes, supporting continuous optimization across planting, treatment, and harvesting cycles.
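Correlating harvest counts with location usually means aggregating per-frame detections into map cells. The sketch below bins (latitude, longitude, count) records into square cells. It uses a local flat-earth approximation around a field origin, with roughly 111 km per degree of latitude. That approximation is an assumption that is reasonable at field scale; a production system would more likely work in a projected coordinate system such as UTM. The coordinates and counts are made up.

```python
import math
from collections import defaultdict

M_PER_DEG_LAT = 111_320.0  # approximate metres per degree of latitude (assumption)


def to_local_m(lat: float, lon: float, lat0: float, lon0: float) -> tuple[float, float]:
    """East/north offsets in metres from a field origin (equirectangular approximation)."""
    north = (lat - lat0) * M_PER_DEG_LAT
    east = (lon - lon0) * M_PER_DEG_LAT * math.cos(math.radians(lat0))
    return east, north


def yield_grid(records, origin: tuple[float, float], cell_m: float = 10.0) -> dict:
    """Sum detected counts per (east_index, north_index) grid cell."""
    cells = defaultdict(int)
    for lat, lon, count in records:
        east, north = to_local_m(lat, lon, *origin)
        cells[(math.floor(east / cell_m), math.floor(north / cell_m))] += count
    return dict(cells)


# Hypothetical per-frame ear counts tagged with GNSS positions.
records = [(40.00000, -90.00000, 5),
           (40.00005, -90.00000, 3),   # about 5.6 m north: same 10 m cell
           (40.00020, -90.00000, 4)]   # about 22 m north: north index 2
print(yield_grid(records, origin=(40.0, -90.0)))
```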

Key imaging and optical requirements for precision crop intervention include:

- High-resolution imaging at short working distances

- Tight and repeatable distortion characteristics for accurate localization

- Low-latency image capture and processing for real-time actuation

- Robust performance under dust, vibration, and changing illumination

- Optional stereo or depth capability to resolve overlapping plants and control tool distance

How does Sunex advance precision intervention and yield optimization?

Sunex supports plant-level imaging applications through precision optics designed for controlled working distances, compact form factors, and consistent geometric performance. Combined with Sunex DXM™ single-sensor stereo technology, these solutions enable depth-aware targeting and counting while minimizing system complexity. Sunex’s focus on manufacturable designs and camera module integration helps customers scale precision intervention systems reliably across high camera counts and demanding agricultural environments, translating imaging performance directly into yield improvement and input efficiency.

Application Area 3: Aerial Crop Analytics & Drone Monitoring

Aerial imaging has become a cornerstone of precision agriculture, offering rapid, flexible insight across large areas that would be impractical to survey from the ground. Drones equipped with cameras are used to assess crop emergence, monitor plant health, identify stress patterns, evaluate irrigation effectiveness, and document storm or pest damage. Imaging allows growers and agronomists to detect issues early and respond with targeted interventions rather than broad, inefficient treatments.

For aerial analytics, image consistency and geometric accuracy are critical. Many use cases rely on orthomosaic generation, time-series comparisons, and quantitative measurements rather than simple visual inspection. As a result, optical performance must remain stable across flights, drones, and deployed fleets. Even small variations in focal length, distortion, or edge sharpness can introduce errors in stitching and analysis.
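For mapping, the key geometric quantity is ground sampling distance (GSD): the ground distance covered by one pixel. For a nadir-pointing camera over flat terrain, GSD follows from sensor width, focal length, altitude, and image width. The camera values in the sketch below are hypothetical. The second call shows a unit whose focal length is only 1% off nominal. Its ground scale changes by about the same amount, the kind of unit-to-unit difference that complicates stitching and time-series comparison.

```python
def gsd_cm(sensor_width_mm: float, focal_mm: float, altitude_m: float, image_width_px: int) -> float:
    """Ground sampling distance in cm/pixel for a nadir camera over flat ground."""
    return sensor_width_mm * altitude_m * 100.0 / (focal_mm * image_width_px)


# Hypothetical survey camera: 6.2 mm wide sensor, 4000 px across, flying at 60 m.
nominal = gsd_cm(6.2, 4.50, 60.0, 4000)
off_nominal = gsd_cm(6.2, 4.545, 60.0, 4000)  # a unit with a 1% longer focal length
print(f"nominal GSD {nominal:.3f} cm/px, swath {nominal * 4000 / 100:.1f} m")
print(f"1% focal error changes ground scale by {100 * (1 - off_nominal / nominal):.2f}%")
```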

Drone imaging platforms also operate under strict constraints. Payload weight directly affects flight time, while vibration and rapid motion place additional stress on optics and sensors. Lighting conditions can shift dramatically within a single flight, from high noon sun to haze, cloud cover, or low-angle illumination near sunrise and sunset. Optics must deliver uniform sharpness and contrast across the image while minimizing flare and vignetting.
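Forward motion translates into blur that can be estimated directly. During each exposure the ground moves by speed × exposure time, and dividing by the GSD gives the smear in pixels. The sketch below uses hypothetical values for flight speed, exposure, and GSD. It also inverts the relation to find the longest exposure that keeps blur under a chosen pixel limit. Vibration and platform rotation, which add to the total blur, are ignored.

```python
def motion_blur_px(ground_speed_m_s: float, exposure_s: float, gsd_m: float) -> float:
    """Image smear in pixels caused by forward motion during one exposure."""
    return ground_speed_m_s * exposure_s / gsd_m


def max_exposure_s(ground_speed_m_s: float, gsd_m: float, blur_limit_px: float = 0.5) -> float:
    """Longest exposure that keeps forward-motion blur at or below blur_limit_px."""
    return blur_limit_px * gsd_m / ground_speed_m_s


# Hypothetical flight: 10 m/s over ground with a 2 cm GSD.
for exposure in (1 / 250, 1 / 1000):
    print(f"1/{round(1 / exposure)} s -> {motion_blur_px(10.0, exposure, 0.02):.2f} px of blur")
print(f"exposure limit for 0.5 px: 1/{round(1 / max_exposure_s(10.0, 0.02))} s")
```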

Most agricultural drone systems combine multiple imaging modalities, such as RGB with near-infrared (NIR) or multispectral channels. High-resolution RGB cameras support visual interpretation and mapping, while NIR or multispectral systems enable vegetation indices and crop health analysis. In all cases, lens performance plays a central role in determining data quality and downstream analytics reliability.
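The Normalized Difference Vegetation Index (NDVI) is a widely used example of the analytics that NIR or multispectral channels enable. It combines near-infrared and red reflectance as (NIR − Red) / (NIR + Red). The sketch assumes co-registered, radiometrically calibrated reflectance values, and the sample numbers are illustrative. Uncorrected vignetting or flare would bias the band values the index is computed from.

```python
import math


def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index for one pixel; NaN when both bands are zero."""
    total = nir + red
    if total == 0.0:
        return math.nan
    return (nir - red) / total


def ndvi_map(nir_band: list[list[float]], red_band: list[list[float]]) -> list[list[float]]:
    """Per-pixel NDVI for two co-registered reflectance bands of equal size."""
    return [[ndvi(n, r) for n, r in zip(nir_row, red_row)]
            for nir_row, red_row in zip(nir_band, red_band)]


# Illustrative reflectances: healthy canopy, stressed canopy, bare soil.
nir = [[0.50, 0.30, 0.25]]
red = [[0.05, 0.10, 0.20]]
print([[round(v, 2) for v in row] for row in ndvi_map(nir, red)])
```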

Key imaging and optical requirements include:

- Lightweight lens designs to preserve flight endurance

- High and uniform sharpness across the full image field

- Controlled distortion for accurate mapping and orthomosaic generation

- Vibration tolerance and mechanical stability during flight

- Compatibility with RGB-IR or multispectral sensing, as required

How does Sunex support aerial analytics?

Sunex provides compact, lightweight optical solutions optimized for embedded imaging platforms where mass and power efficiency matter. Its experience with wide-FOV and precision RGB-IR optics enables drone developers to balance coverage and resolution without compromising geometric reliability. Sunex’s emphasis on production repeatability further supports fleet-level deployment, ensuring that data captured by different drones remains comparable over time.

Application Area 4: Environmental Monitoring & Weather Intelligence

Environmental monitoring systems form the sensing backbone of modern farms, providing localized intelligence that complements regional weather forecasts and point-based sensors. Cameras integrated into weather stations, field masts, or mobile nodes add valuable visual context to measurements such as temperature, humidity, wind, and precipitation. Imaging can confirm cloud cover, visibility, fog formation, dust events, and storm conditions, enabling more informed operational decisions.

Unlike mobile platforms, environmental imaging systems are often expected to operate continuously for years with minimal maintenance. This places stringent requirements on optical durability and long-term stability. Lenses must resist UV exposure, temperature cycling, moisture ingress, and contamination while maintaining consistent focus and image quality. Any drift in optical performance can undermine the value of long-term data sets and trend analysis.
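One way to catch slow optical drift in a fixed installation is to track a sharpness score for the same scene over time. Each new score is compared with a baseline captured at installation. The sketch uses the variance of a 4-neighbour Laplacian, a common no-reference focus measure, on a grayscale image stored as nested lists. Weather, lighting, and seasonal scene changes also move this score. The 70% threshold and the assumption of comparing frames taken under similar conditions are illustrative, not a validated procedure.

```python
def laplacian_variance(img: list[list[float]]) -> float:
    """Variance of the 4-neighbour Laplacian over interior pixels (higher means sharper)."""
    if len(img) < 3 or len(img[0]) < 3:
        raise ValueError("image must be at least 3x3")
    responses = [
        img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1] - 4 * img[y][x]
        for y in range(1, len(img) - 1)
        for x in range(1, len(img[0]) - 1)
    ]
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def focus_drifted(current: float, baseline: float, min_ratio: float = 0.7) -> bool:
    """Flag drift when sharpness falls below a fraction of the installation baseline (assumed 70%)."""
    return current < min_ratio * baseline


# Synthetic 4x4 test patterns: a sharp checkerboard and a lower-contrast (blur-like) version.
sharp = [[100.0 * ((x + y) % 2) for x in range(4)] for y in range(4)]
soft = [[40.0 + 20.0 * ((x + y) % 2) for x in range(4)] for y in range(4)]
baseline = laplacian_variance(sharp)
print(baseline, laplacian_variance(soft), focus_drifted(laplacian_variance(soft), baseline))
```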

Visual environmental monitoring is increasingly used to validate and enrich sensor data. For example, imaging can confirm rainfall intensity, identify localized fog pockets, or visually document erosion and runoff after heavy storms. In some deployments, day/night imaging or RGB-IR configurations extend monitoring capabilities beyond daylight hours, supporting around-the-clock situational awareness.
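Day/night cameras commonly switch between a colour mode and an IR-sensitive night mode, for example by moving an IR-cut filter. A hysteresis band keeps them from toggling repeatedly at dusk. The sketch below is a hypothetical controller driven by an ambient light reading. The lux thresholds, and the use of a separate light reading as the input, are assumptions for illustration.

```python
class DayNightSwitch:
    """Day/night mode selection with hysteresis (thresholds are hypothetical)."""

    def __init__(self, to_night_lux: float = 5.0, to_day_lux: float = 20.0) -> None:
        if to_night_lux >= to_day_lux:
            raise ValueError("to_night_lux must be below to_day_lux")
        self.to_night_lux = to_night_lux
        self.to_day_lux = to_day_lux
        self.mode = "day"

    def update(self, ambient_lux: float) -> str:
        """Switch only when light crosses the far side of the hysteresis band."""
        if self.mode == "day" and ambient_lux < self.to_night_lux:
            self.mode = "night"   # e.g. remove IR-cut filter, enable IR illumination
        elif self.mode == "night" and ambient_lux > self.to_day_lux:
            self.mode = "day"     # e.g. restore IR-cut filter for accurate colour
        return self.mode


switch = DayNightSwitch()
dusk_to_dawn = [50.0, 10.0, 4.0, 8.0, 15.0, 25.0]
print([switch.update(lux) for lux in dusk_to_dawn])
```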

Key imaging and optical requirements include:

- Long-term focus and alignment stability

- Resistance to UV exposure, moisture, and airborne contaminants

- Wide operating temperature range

- Optional support for low-light or day/night imaging modes

How does Sunex support environmental intelligence?

Sunex designs optics with environmental stability and durability in mind, supporting fixed outdoor installations that must perform reliably over long service lives. Through consistent optical performance and integration support for compact camera modules, Sunex helps enable scalable deployment of visual monitoring nodes across geographically distributed agricultural operations.

Application Area 5: Infrastructure Monitoring & Facility Automation

Smart Agriculture extends beyond fields and crops to include a wide range of supporting infrastructure, such as barns, grain storage facilities, processing areas, equipment yards, and perimeter zones. Imaging systems are increasingly deployed in these environments to improve operational efficiency, safety, and remote visibility. Cameras enable automated inspection, inventory monitoring, safety enforcement, and facility-level analytics, reducing manual labor and improving response times.

Facility environments introduce their own imaging challenges. Dust, humidity, low or uneven lighting, and the presence of moving machinery can degrade image quality if optics are not properly designed. In enclosed spaces, wide-field coverage is often needed to minimize camera count, while certain tasks—such as level monitoring in silos or belt inspection—require predictable geometry and sufficient detail.

In livestock or processing facilities, imaging can support automation and welfare monitoring while operating discreetly in constrained spaces. These applications often demand compact optical assemblies that can integrate cleanly into existing structures without interfering with daily operations.

Key imaging and optical requirements include:

- Reliable performance in low-light or mixed-lighting environments

- Wide-FOV lenses for situational awareness in large interiors

- Compact form factors for unobtrusive installation

- Resistance to dust, moisture, and cleaning agents

How does Sunex support facility automation?

Sunex offers compact, high-performance lens solutions suited for embedded monitoring systems used throughout agricultural infrastructure. By combining optical performance with manufacturable designs and module-level integration support, Sunex enables customers to deploy imaging systems that remain reliable in demanding indoor and semi-outdoor environments while scaling efficiently across multiple facilities.

Implementation Roadmap: From Concept to Deployable Imaging Systems

Define the Imaging Job-to-be-Done

For each application area, clarify:

- What is the decision/action driven by vision?

- What are the false-positive/false-negative costs?

- What is the required detection distance and accuracy?

- What operating hours and weather conditions must be supported?

These answers translate into quantitative optical requirements: FOV, resolution, sensitivity, distortion tolerance, and environmental constraints.
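As an example of that translation, the sketch below derives two requirements from a coverage width, a working distance, and the smallest feature to be detected: a minimum horizontal pixel count and a rectilinear horizontal FOV. It assumes the smallest feature should span a fixed number of pixels; the right number depends on the detection model. The example values are hypothetical.

```python
import math


def required_pixels(coverage_m: float, smallest_feature_mm: float, px_per_feature: float = 4.0) -> int:
    """Minimum horizontal pixels so the smallest feature spans px_per_feature pixels."""
    mm_per_px = smallest_feature_mm / px_per_feature
    return math.ceil(coverage_m * 1000.0 / mm_per_px)


def required_hfov_deg(coverage_m: float, working_distance_m: float) -> float:
    """Horizontal FOV a rectilinear lens needs to span coverage_m at working_distance_m."""
    return math.degrees(2.0 * math.atan(coverage_m / (2.0 * working_distance_m)))


# Hypothetical weed-detection camera: 1 m wide strip from 0.4 m, 5 mm seedlings, 4 px across.
print(required_pixels(1.0, 5.0), "px across,",
      f"{required_hfov_deg(1.0, 0.4):.1f} deg HFOV")
```

In this hypothetical case, the FOV comes out above 100° at short range. That pushes toward wide-angle designs, where distortion tolerance becomes a key specification.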

Choose the Right Optical Approach

A successful strategy often uses a mix:

- Wide-FOV lenses for navigation and coverage

- Narrower-FOV lenses for detail tasks

- Multi-camera arrays to reduce compromise

- Calibration strategy aligned with manufacturing repeatability

Sunex can support either selection from existing lens families or the development of custom optics that better match the application’s true constraints.

Integration and Scale Considerations

Moving from prototype to production requires:

- Optical performance that is achievable with real manufacturing tolerances

- Repeatable assembly and predictable alignment methods

- Mechanical design aligned with sealing and thermal stability

- A test strategy that verifies performance efficiently at volume
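At volume, a test strategy often reduces to a fast pass/fail decision per module. The sketch below checks measured MTF values at a few field positions against minimum limits and reports a batch pass rate. The field names, limits, and measurements are hypothetical and are not drawn from any Sunex test specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MtfLimit:
    field: str      # field position label, e.g. "center" or "corner" (hypothetical)
    min_mtf: float  # minimum acceptable MTF at the test spatial frequency


def evaluate_unit(measured: dict[str, float], limits: list[MtfLimit]) -> list[str]:
    """Return the field positions that fail (missing or below limit); empty means pass."""
    return [lim.field for lim in limits
            if measured.get(lim.field) is None or measured[lim.field] < lim.min_mtf]


# Hypothetical limits and three measured modules.
LIMITS = [MtfLimit("center", 0.50), MtfLimit("corner", 0.30)]
batch = {
    "SN001": {"center": 0.62, "corner": 0.35},
    "SN002": {"center": 0.60, "corner": 0.28},
    "SN003": {"center": 0.58},
}
results = {sn: evaluate_unit(m, LIMITS) for sn, m in batch.items()}
pass_rate = sum(not fails for fails in results.values()) / len(results)
print(results, f"pass rate {pass_rate:.0%}")
```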

Sunex’s strengths in manufacturable optics and camera module integration are directly relevant here: reducing risk as products transition from “works on the bench” to “works in the field, across fleets.”

How do Sunex lenses support AI-based agricultural analytics systems?

Imaging is becoming the backbone of Smart Agriculture because it scales across autonomy, analytics, environmental intelligence, and facility automation. The value is clear: improved yield, reduced inputs, higher machine efficiency, better documentation, and faster response to stress and events. But achieving these outcomes at scale requires more than selecting a camera—it requires optics and integration engineered for the realities of agriculture: extreme lighting, harsh environments, vibration, long life, and wide deployment.

Sunex advances Smart Agriculture imaging by enabling robust optical performance and manufacturable designs that keep camera geometry consistent and reliable. Whether the goal is wide-FOV perception for autonomous harvesting, lightweight optics for drone mapping, rugged lenses for weather intelligence nodes, or compact cameras for facility automation, the same principle holds: better optics reduce system risk, shorten development cycles, and improve real-world performance.

How should teams define lens specifications for agricultural vision systems?

Turn imaging ideas into deployable systems—faster.

The Sunex Smart Agriculture Imaging Discovery Worksheet is a practical, fill-in framework designed to align product, engineering, and manufacturing teams early in the development process. It helps structure the right technical conversations before critical architecture decisions are made—reducing risk, rework, and time to deployment across autonomous machines, precision intervention systems, drones, environmental monitoring, and facility automation.

The worksheet is organized into focused tabs that guide discovery step by step:

- Quick Discovery – A one-page overview for early conversations, trade shows, or first technical calls.

- Application Overview – Defines the use case, platform, deployment scale, and timeline.

- Operating Environment – Captures real-world conditions such as dust, moisture, temperature, vibration, and ingress protection.

- Imaging Performance – Clarifies detection goals, resolution, latency, lighting, and spectral requirements.

- Optical Requirements – Translates system needs into field of view, distortion, working distance, and packaging constraints.

- Sensor & System – Aligns optics with sensor choice, compute platform, power, and synchronization needs.

- Calibration & Manufacturing – Addresses scalability, tolerances, cost sensitivity, and production readiness.

- Program Summary – Automatically consolidates inputs into a concise brief for internal alignment and next-step planning.

Whether you’re evaluating a new concept or preparing for production, the discovery worksheet helps teams move forward with clarity—grounding imaging decisions in real application needs and setting the foundation for robust, scalable solutions.